February 2020 Update

Hey everyone, Ryan here. It’s been a while since my last update and there’s a lot to discuss, so apologies in advance if this runs a bit long. We’ve spent the last few months working on some big changes internally to allow us to roll out new features faster, reduce bugs/regressions, and improve the overall quality of Arsenal across the 100+ cameras we support. Before we get to that, I wanted to touch on the headline features for the next Arsenal release.

The New Release (0.9.94)

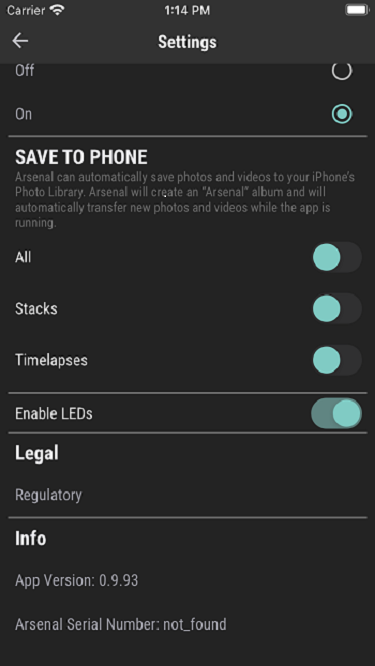

Photos can now be automatically saved to the phone!

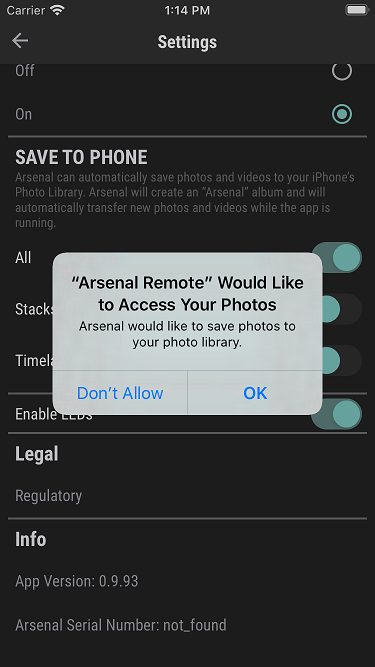

This was an often-requested feature that ended up being more work than you might expect. Under Settings there are now sliders for saving "All", "Stacks", or "Timelapses". Arsenal will automatically create an "Arsenal" album in which photos, stacks, or timelapses will be placed. By default, "All" will be selected. In addition, while files are being saved, a status indicator on the gallery page of the Arsenal mobile app shows how many files are left to sync.

The Arsenal app can transfer photos while in the background, but iOS 13 and recent Android versions limit how long an app can keep transferring in the background. This means that if you background the Arsenal app before the sync is complete, it may not finish syncing all photos. It’s best to make sure the sync status indicator shows the sync is complete before backgrounding or exiting the app. If you leave the app before the sync completes, it will pick up where it left off the next time you open the app.
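As a rough illustration of the "pick up where it left off" behavior, here is a minimal Python sketch. It is not the actual app code: the function names, the `budget` parameter (standing in for a limited background window), and the file names are all made up for the example.

```python
def pending_files(device_files, synced):
    """Return the files still waiting to be saved, in their original order."""
    return [name for name in device_files if name not in synced]


def sync(device_files, synced, budget):
    """Save up to `budget` files, simulating a limited background window.

    `synced` is a set assumed to persist between app launches (hypothetical).
    Returns how many files are still left to sync.
    """
    for name in pending_files(device_files, synced)[:budget]:
        synced.add(name)  # stand-in for writing the photo to the album
    return len(pending_files(device_files, synced))


files = ["IMG_001.jpg", "IMG_002.jpg", "IMG_003.jpg", "IMG_004.jpg"]
done = set()
left_after_first = sync(files, done, budget=3)   # app backgrounded early
left_after_second = sync(files, done, budget=3)  # app reopened later
```

Because the set of already-saved files is remembered, the second run only has to handle what the first run missed.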

Performance Improvements

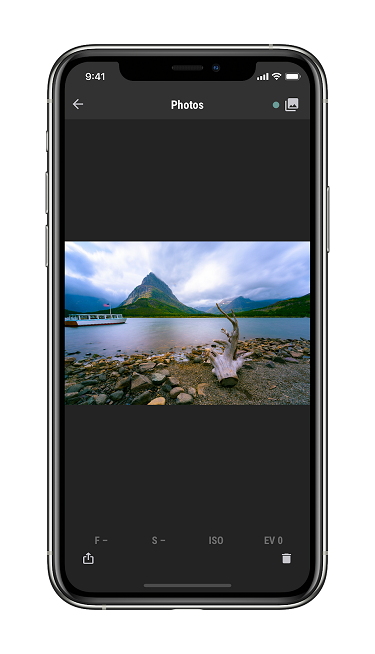

We’ve got quite a few performance improvements in this release (with more in the works). The big improvements relate to the gallery and photo loading. For example, photo processing priority is now bumped up based on which photo you are looking at in the gallery, which should make browsing the gallery feel more responsive.
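One common way to get this kind of "bump what the user is looking at" behavior is a priority queue. The sketch below is only an illustration under that assumption; the `ProcessingQueue` class and its methods are hypothetical and not taken from the Arsenal codebase.

```python
import heapq
import itertools


class ProcessingQueue:
    """Toy priority queue: a lower number means processed sooner."""

    def __init__(self):
        self._heap = []
        self._latest = {}                  # photo id -> most recent valid entry
        self._counter = itertools.count()  # tie-breaker keeps FIFO order

    def add(self, photo_id, priority=10):
        entry = [priority, next(self._counter), photo_id]
        self._latest[photo_id] = entry
        heapq.heappush(self._heap, entry)

    def bump(self, photo_id):
        """Move a queued photo to the front, e.g. when the user opens it."""
        if photo_id in self._latest:
            self.add(photo_id, priority=0)  # the old entry becomes stale

    def pop(self):
        """Return the next photo to process, skipping stale entries."""
        while self._heap:
            entry = heapq.heappop(self._heap)
            photo_id = entry[2]
            if self._latest.get(photo_id) is entry:
                del self._latest[photo_id]
                return photo_id
        return None


queue = ProcessingQueue()
for pid in ["a", "b", "c"]:
    queue.add(pid)
queue.bump("c")  # the user is now looking at photo "c"
order = [queue.pop() for _ in range(3)]
```

Here `order` comes out as `["c", "a", "b"]`: the viewed photo jumps the line, and everything else keeps its original order.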

Exposure Bracketing

You can now do exposure stacks in shutter priority and manual mode on the camera.

Bug Fixes

As usual, there are quite a few bug fixes in this release. We’ll put them all in the release notes, but here are a few of note:

- Added a workaround for the iOS 13 "Code 8" issue

- We think we fixed the issue that caused the accelerometer orientation to be read incorrectly, which resulted in incorrectly rotated live view images; let us know if you still see this happening

- Improved handling of some iOS and Sony Wi-Fi camera connection issues

Power Consumption/Battery Life Improvements

We’ve been doing some work profiling the power consumption of Arsenal for various tasks. Through some software changes, we’re able to draw less power and therefore extend the battery life. This release contains a few improvements for the battery life of Arsenal, especially around handheld mode.

New Camera Support

We added support for the Nikon Z50, Sony A6100, and Sony A6600. Our internal testing has gone well, and we’ll be sending them to BETA with the new release. If that goes smoothly, we’ll officially support these models with the next release. If they need much more work, we’ll continue with the release and hold off on the new cameras (so as not to delay the other new features).

The Sony A7RIV is on my desk. We’re working through one last thing to get it working with Arsenal, but we didn’t want to hold up the release, so look for it in a follow-up release, hopefully soon.

Release Date

We don’t have an exact release date yet as we finalize some internal testing and then go to BETA. That said, we’re targeting the week of February 24. We’ll announce the release on our social channels once it’s available, and we’ll publish full release notes as well. You can see an early draft of the release notes here.

Behind the Scenes Improvements

When you’re a startup launching a new product, there’s always more that can be built and more that can be tested. Setting your product up for regular releases months and years down the line is often a lower priority at launch, as companies focus on the highest-value features that customers care about. If the product goes well, the company then spends time improving its behind-the-scenes software and processes so it can deliver new releases more quickly.

The past few months the team and I have been focusing on two areas in particular that are along these lines. Firmware updates allow us to continue improving Arsenal after it’s out the door, and the hardware team did a great job making sure we could continue adding new improvements without the hardware slowing us down. These “under the hood” changes should dramatically improve the speed at which we can get new features out the door, as well as increase the speed, stability, and overall performance of Arsenal.

NOTE: From here on in it’s going to get a bit technical, but hopefully it will be an interesting look behind the scenes of building a product like Arsenal.

Automated Testing and The Banana Stand

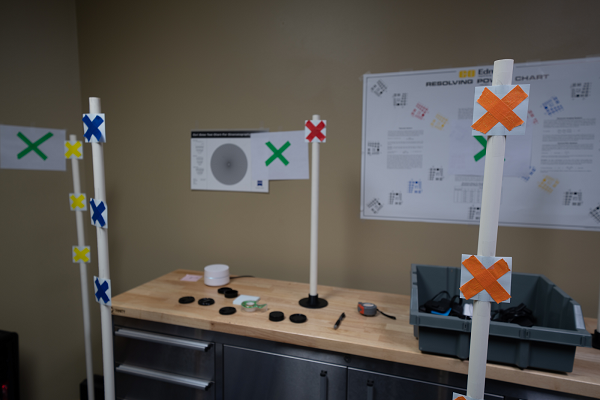

Arsenal interfaces with over 100 camera models. When we do a new release, our (relatively small) quality assurance team has to run a full set of checks on every camera. This is a long, manual process, and in the past it could take a month or more to check all cameras. After we fix any issues, there’s some back and forth and more testing before a release can go out. This workflow is standard in the hardware world, and it’s the reason you rarely see large firmware updates from camera manufacturers (who only have to test with a single camera!). To speed up Arsenal releases, we’ve been working on something we call “The Banana Stand” (an Arrested Development reference).

The Banana Stand is a rack where we have every camera supported by Arsenal, with dedicated power for the camera and Arsenal. We wrote test scripts that automatically trigger each Arsenal/camera combination as you’ll see in the above video.

After a build, each Arsenal on the Banana Stand gets updated with the firmware being tested. The team and I have been writing integration tests that simulate how a user would interact with Arsenal. The code also confirms that Arsenal produces the expected behavior on the camera. When we build new features, or run into bugs, the first thing we do is write a test. This helps us ensure Arsenal is performing as expected on all of our supported cameras. [Side note: the Banana Stand was something I wanted to do from day one, but it was a pretty serious undertaking that took a lot of time to get up and running. Sometimes with startups you have to choose between doing everything and shipping something.]

Failed tests can be quickly seen by anyone on the team and fixed. Updates will get run through the build system and Banana Stand anytime code is pushed. This is especially helpful since our development team is remote and traditionally not everyone has had physical access to every camera.

The Banana Stand Hardware

The Banana Stand is built from prefab steel rails and mostly off-the-shelf parts. The rails can be moved up and down to make room for larger cameras. We found some cheap (but surprisingly good) autofocusing 50mm lenses to use. The cameras are pointed at a set of test charts, and in the future, we might get an HDR TV to test a few things like motion estimation and subject analysis. Phillip, our electrical engineer, did a great job keeping cables clean and putting everything together.

USBs on the Banana Stand

Getting the Banana Stand working required solving a few challenges. All Arsenals on the stand connect to a test runner machine via USB. While USB technically supports 127 devices per host controller, we have yet to see a USB chipset that can actually support that many. Also, USB is not designed to gracefully handle hitting a bandwidth limit.

To solve these issues, we used a Thunderbolt PCIe enclosure on the host machine. We found some PCIe USB cards with 4 root hub chips per card. Two of these cards gave us effectively 8 different USB pathways, requiring only 12 devices per hub. We originally considered controlling Arsenals and doing firmware updates over Wi-Fi, but we figured the available bandwidth would be an issue and we didn’t want to slow down our office internet.
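The arithmetic behind that layout can be sketched in a few lines of Python. The device count of 96 is purely illustrative (chosen because it divides evenly into 12 per hub), and the device names and round-robin assignment are assumptions for the example, not a description of how the stand is actually wired.

```python
CARDS = 2
ROOT_HUBS_PER_CARD = 4


def assign_to_hubs(device_ids, hub_count):
    """Spread devices round-robin across root hubs so the load stays even."""
    hubs = [[] for _ in range(hub_count)]
    for index, device in enumerate(device_ids):
        hubs[index % hub_count].append(device)
    return hubs


hub_count = CARDS * ROOT_HUBS_PER_CARD             # 8 independent USB pathways
devices = [f"arsenal-{n:03d}" for n in range(96)]  # illustrative count only
hubs = assign_to_hubs(devices, hub_count)
busiest = max(len(hub) for hub in hubs)            # devices on the fullest hub
```

Splitting the devices this way keeps each root hub far below the theoretical USB device limit and spreads the bandwidth load across separate controllers.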

Manual Camera Controls on the Banana Stand

Another challenge with writing tests is that the cameras can be in a lot of different states. We can control a lot of those states programmatically, but on cameras with a mode dial, the dial usually needs to be physically moved. To accomplish this, we flag tests with the mode they need, and tests are run in groups with a “block” step between them. During the block step, someone from the team has the job of going and changing every camera to the associated mode. We briefly discussed trying to automate changing the mode dial with some sort of motor. This would probably be more work than it's worth though, so for the time being we’ve decided to stick to changing modes manually.
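The grouping idea can be pictured with a short Python sketch. This is a simplified stand-in rather than the real test runner: the `plan_run` function, the test names, and the mode labels are all hypothetical.

```python
from collections import defaultdict


def plan_run(tests):
    """Group tests by required dial mode and add a block step per group.

    `tests` is a list of (test_name, mode) pairs. Returns a flat list of
    steps, where ("block", mode) means "pause until someone has turned
    every camera's dial to this mode".
    """
    by_mode = defaultdict(list)
    for name, mode in tests:
        by_mode[mode].append(name)

    steps = []
    for mode, names in by_mode.items():  # dicts keep first-seen order
        steps.append(("block", mode))
        steps.extend(("run", name) for name in names)
    return steps


tests = [
    ("test_exposure_stack", "manual"),
    ("test_timelapse", "aperture"),
    ("test_bulb_ramp", "manual"),
]
steps = plan_run(tests)
```

Grouping first means each mode change only has to happen once per run, no matter how many tests need that mode.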

The Banana Stand Tests

While we’re still adding to the test suite, I’m really excited to have coverage on some of our more involved features. To test things like focus calibration and focus stacking, we have colored focus targets at various distances. There are tests that automatically find the location of a focus target by color in the frame and focus on it. Then the code can check that the focus shifted from other charts as expected.
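To give a feel for what "finding a target by color" can look like, here is a deliberately simple Python sketch that takes the centroid of all pixels close to a target color. The real tests presumably work on actual camera frames with more robust image processing; the `find_target` function, the tolerance value, and the tiny synthetic frame are assumptions made for the example.

```python
def find_target(frame, target_rgb, tolerance=40):
    """Return the (x, y) centroid of pixels close to target_rgb, or None.

    `frame` is a list of rows, each row a list of (r, g, b) tuples.
    """
    xs, ys = [], []
    for y, row in enumerate(frame):
        for x, pixel in enumerate(row):
            if all(abs(c - t) <= tolerance for c, t in zip(pixel, target_rgb)):
                xs.append(x)
                ys.append(y)
    if not xs:
        return None
    return (sum(xs) / len(xs), sum(ys) / len(ys))


GRAY, RED = (128, 128, 128), (220, 30, 30)
frame = [[GRAY] * 6 for _ in range(4)]  # 6x4 synthetic gray frame
for y in (1, 2):
    for x in (3, 4):
        frame[y][x] = RED               # paint a 2x2 red "focus target"
center = find_target(frame, target_rgb=(255, 0, 0))
```

The returned coordinates could then be used as the point to focus on.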

For other tests, we check the metadata of taken photos to ensure changes happen when expected. We’ve also been adding a set of performance tests to see how long different operations take on different cameras.
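Both kinds of checks are easy to picture in code. The Python sketch below compares two metadata dictionaries and times an operation; the helper names and the sample metadata values are invented for illustration and are not from the actual test suite.

```python
import time


def changed_fields(before, after):
    """Return the metadata keys whose values differ between two photos."""
    keys = set(before) | set(after)
    return {key for key in keys if before.get(key) != after.get(key)}


def timed(operation):
    """Run operation() and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = operation()
    return result, time.perf_counter() - start


# Hypothetical metadata from two photos taken before and after an ISO change.
before = {"ISO": 100, "ExposureTime": "1/125", "FNumber": 8.0}
after = {"ISO": 400, "ExposureTime": "1/125", "FNumber": 8.0}
diff = changed_fields(before, after)
_, elapsed = timed(lambda: changed_fields(before, after))
```

A test can then assert that only the expected fields changed, and record the elapsed time per camera to spot slow operations.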

Ultimately this is a huge improvement on the breadth, depth, and speed of testing Arsenal. We will still be doing lots of field testing, as some features or conditions we can’t replicate in our office. And of course we will continue to send builds to our BETA teams before general release. For Arsenal users, the end result of faster build processes, better quality, and higher performance will really be exciting.

The New Build System

Arsenal Components

A lot goes into getting a firmware release out the door, and recently we’ve been spending a lot of time developing a new “build system” so we can complete releases a lot faster. As a little background, Arsenal’s software consists of a few high-level pieces, all of which need to be built and tested before a release can go out the door.

The firmware/firmware build system

- This is a set of tooling that compiles a custom Linux setup that runs on Arsenal’s processor. This includes a full Linux build, including the kernel, drivers, and our own device code. Some of the on-device software is compiled on a dedicated Arsenal (that sits in our office). While it is possible to “cross compile” software, compiling directly on the device has a few advantages for us. First, it's easier to set up, and second, we can be sure all processor-specific optimizations are getting compiled in.

The device software

- Most of the code the Arsenal team writes day to day is what we call “device code.” This is the software that runs on the Arsenal and does things like communicate with the cameras/phones, process images, run timelapses, calculate settings, etc. Our mobile apps are fairly small codebases that mostly interface with the device code. This prevents us from needing to write things twice and makes it possible to do things like run handheld mode without a phone connected.

The mobile apps

- Currently Android and iOS are two separate codebases. While there are ways to build an iOS and Android app with the same codebase, at the time we started building Arsenal (5 years ago now) none of these were ready for prime time (this is something we may revisit in the future though). The mobile apps are fairly small, but do a bit of image processing and have some complexities around showing lots of remote images quickly.

The machine learning stack

- There’s a good chunk of code that doesn’t end up on Arsenal or in the apps, but is used to train our machine learning models. This code builds a few different model files, which then get copied into the device software.

There are a few other assorted pieces, and needless to say, doing a full build of everything has previously been a fairly time-consuming manual process. Any change in one part could require changes in the rest of the system. Until now, builds were done by me (Ryan), mostly by hand. While it is not uncommon for companies to manage builds by hand this way, from day one I’ve wanted to have a fully automated build system (to take it off my plate, to improve our turnaround time on new code, and to ensure all changes were tested together with associated changes in other parts of the system).

Announcing The New Build System

Thanks to the team’s hard work, the new build system is in place. We decided to use git submodules to manage each of the 15 different repositories (git submodules have come a long way since the early days). Submodules let us ensure any change in any part of the system can be tracked with associated changes across repository boundaries.

We built our new build system on top of buildkite.com. So far I’ve been very impressed. Their build agent lets us run code on various hardware and create pipelines that trigger when a previous step is complete.

Our new build pipeline looks like this:

- A GitHub pull request is created on our main repo

- The changes are checked out on our firmware build machine, which starts a firmware build on a dedicated Arsenal. This includes steps for copying up machine learning models, device code, support packages, etc.

- After the firmware is built on the device, it is copied into a firmware image, which is then uploaded to our firmware server (Amazon S3).

- Next, the firmware is uploaded to our test system and our integration tests run (see the Banana Stand section above).

- If the integration tests pass, our “app build machine” (a dedicated Mac laptop) pulls in the changes and builds an iOS and Android version of the app, and uploads them to TestFlight and the Android testing track, for QA to test.

- Finally, once QA approves the build (which can take a while), the apps are manually moved to beta, where our beta testers can test them. QA and Customer Support correlate the feedback from each build, and if things look good, we do a production release.
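The key property of the pipeline above is that each step only runs once the previous one has succeeded. The following Python sketch shows that gating logic in isolation. It is not Buildkite configuration and does not use Buildkite's API; the `run_pipeline` function and the stand-in steps are hypothetical.

```python
def run_pipeline(steps):
    """Run steps in order and stop doing work after the first failure.

    `steps` is a list of (name, callable) pairs; each callable returns True
    on success. Returns a (name, status) pair for every step.
    """
    statuses = []
    blocked = False
    for name, step in steps:
        if blocked:
            statuses.append((name, "skipped"))
            continue
        ok = step()
        statuses.append((name, "passed" if ok else "failed"))
        blocked = not ok
    return statuses


steps = [
    ("build firmware on device", lambda: True),
    ("upload firmware image", lambda: True),
    ("integration tests", lambda: False),  # simulate a failing test run
    ("build iOS and Android apps", lambda: True),
]
statuses = run_pipeline(steps)
```

In this simulated run the app build is skipped because the integration tests failed, which is the behavior you want before anything reaches QA.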

Buildkite does a great job of showing us the status every step of the way. The build statuses get placed back on the GitHub pull request. This type of build system makes it easier for anyone on the team to make changes to other parts of the system without fear of breaking something.

While this is very much a ‘behind the scenes’ improvement, it will translate into faster, higher-quality releases for Arsenal users.

Other Improvements We’re Working On

Since Arsenal was first shipped, we’ve had a few software updates that improved performance. Now though, we’re in the middle of two projects that will provide much larger performance gains. Based on feedback, we’re addressing our two main bottlenecks right now:

Arsenal Connection

- A lot of setup happens when you connect to Arsenal. In addition to the network setup, there are a lot of state and event listeners that get registered and quite a bit of initial state that has to get sent down. We’ve been reworking things to speed up the initial connection. This was an area of the codebase that was getting quite crufty, and we did a full refactor on it. It’s now much better code, should be more resilient, and is easier to write tests against.

Camera Interfacing

- As previously stated, Arsenal works with over 100 cameras. In a perfect world, each camera would speak the same language and we could write code once to talk to every camera. While there is a standard for communicating with cameras (PTP, aka Picture Transfer Protocol), the reality is most cameras don’t even come close to following the spec. To get Arsenal out the door faster we originally used an open source library to manage communicating with the cameras and abstracting away some of the camera specific complexity.

As time has gone on though, a lot of those abstractions have gotten in the way of performance. To improve performance, we decided we needed to start fresh. One person on the team spent about three months getting the initial prototype working, and now that the Banana Stand is up, we’re starting the process of moving cameras over to the new system. By removing some of the abstraction (and moving some of it into the Arsenal codebase itself), we’ve been able to make some big improvements to camera interaction speed and the related user experience.

It's still a little too early to say how long the migration to the new system will take, but we’re going to work hard to get everything migrated quickly. The other big benefit is that the new system will let us add new cameras much more quickly. Assuming the cameras have the API endpoints we need, adding new cameras will become a fairly fast operation.
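For readers who haven't seen PTP before, here is a small Python sketch of what a PTP command looks like on the wire over USB, based on the generic container layout in the PTP standard (length, type, code, transaction ID, then parameters, all little-endian). This only illustrates the standard format; it says nothing about how Arsenal's new camera layer is written, and the helper function names are made up for the example.

```python
import struct

# Values defined by the PTP standard for the USB transport.
CONTAINER_TYPE_COMMAND = 0x0001
OPCODE_OPEN_SESSION = 0x1002


def build_command(opcode, transaction_id, *params):
    """Pack a PTP command container: length, type, code, transaction id, params."""
    length = 12 + 4 * len(params)  # 12-byte header plus 4 bytes per parameter
    header = struct.pack(
        "<IHHI", length, CONTAINER_TYPE_COMMAND, opcode, transaction_id
    )
    return header + struct.pack(f"<{len(params)}I", *params)


def parse_header(data):
    """Unpack the 12-byte container header into a dict."""
    length, kind, code, transaction_id = struct.unpack_from("<IHHI", data)
    return {
        "length": length,
        "type": kind,
        "code": code,
        "transaction_id": transaction_id,
    }


packet = build_command(OPCODE_OPEN_SESSION, 0, 1)  # OpenSession, session id 1
header = parse_header(packet)
```

The standard itself is compact; the hard part described above is that each camera extends or deviates from it in its own way, which is why per-camera handling ends up mattering so much.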

If you made it this far, get yourself a nice drink because you deserve it!

There’s a lot in the works for future Arsenal releases. This current release is the first that's starting to reap the benefits of some of the hard work the team has put in during the last few months. Thanks to the team for all of the hard work.

As always, let us know if you have any questions about the update or if you need any assistance with your Arsenal (help@witharsenal.com). Thanks again for all of your support.

- Ryan